If only it were as simple as saying that the popular ‘XYZ’ antivirus (AV) product is the best and outperforms all the rest. Unfortunately, marketing can be deceiving. In our testing, popularity and quality did not go hand in hand for most AVs, and progress in AV detection looks poor. But how did we come to this conclusion? Are we just being biased as pen testers? We used VirusTotal to benchmark how different AV solutions react to malware before and after some simple changes were made.

Some of the most recognised AV solutions failed to detect malware that had gone through simple bypass steps. This means attackers can bypass these AVs with minimal skill and effort. However, where some fall, others rise to the challenge. Some AV solutions consistently flagged our malware samples before the files became “known” as malicious among vendors.

Your Antivirus may not be detecting malware

How on earth did we get here? It is a question most of us ask from time to time, and in this case the end result was not what we originally set out to do. Initially, the goal was to bypass one specific AV for an upcoming Security Penetration Testing client engagement. This AV was one of the many “well known” products, but it failed to impress when put to the test. Part of the goal was therefore to bypass it with as little effort as possible.

While doing this, we used VirusTotal to get an idea of whether our executables would look innocent to the AV in question. Along the way, we noticed a few other AVs that also dropped their shield far too easily. These results inspired us to demonstrate how your AV probably sucks. This does not mean you will fail to detect malicious activity through other means, such as spotting abnormal user behaviour, but it does show how reliable your AV is at detecting threats. We use VirusTotal for the comparison because it runs a single sample against many AV engines at once.

Our baseline, goal and measurement for security testing

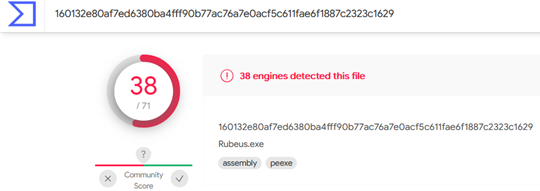

To know how far we have come, we needed a starting line to measure from. For this, we used VirusTotal and a default Rubeus binary (https://github.com/GhostPack/Rubeus). After compiling Rubeus with no changes to the code, we uploaded it to VirusTotal and found that 38/71 AVs flagged it as malicious at the time.

Within 2 weeks, this had increased to 43, suggesting that other vendors became aware of the sample through shared data. Considering that Rubeus has been around for over 3 years, these results are not promising. However, they give us a baseline to beat.

First testing methodology used

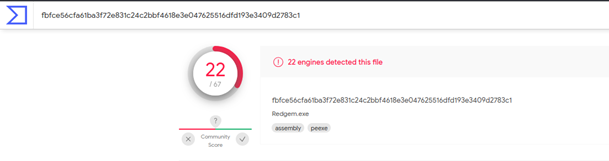

The first method was simply to replace known words and strings within the code so that it looked less suspicious and was harder to detect through string matching. Over a dozen strings were changed, such as “Rubeus” and “Kerberoast”, which have no place in an innocent-looking binary. After several run-throughs to make sure the code still worked and all potentially suspicious strings had been removed, it was uploaded to VirusTotal again under the name “Redgem”.

As seen above, the initial result was 22/67, so string changes alone removed 16 of the 38 baseline detections, a drop of around 40%. Within 2 weeks the result was 38/69, suggesting other AVs became aware of the sample because it had been uploaded online. Changing strings can be automated and done very quickly, so an AV that relies on string matching will at best detect malicious binaries with a lag.
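To make the arithmetic explicit, here is a small sketch that recomputes the figures quoted above. The function names are our own for illustration; the numbers are the VirusTotal results reported in this post.

```python
# (detections, engines) pairs as reported in the text.
results = {
    "Rubeus baseline": (38, 71),
    "Redgem, initial": (22, 67),
    "Redgem, two weeks later": (38, 69),
}


def detection_rate(detected: int, total: int) -> float:
    """Share of engines that flagged the sample, as a percentage."""
    return 100.0 * detected / total


def relative_drop(before: int, after: int) -> float:
    """Percentage of the earlier detections that were lost."""
    return 100.0 * (before - after) / before


for label, (detected, total) in results.items():
    rate = detection_rate(detected, total)
    print(f"{label}: {detected}/{total} = {rate:.1f}%")

# 16 of the 38 baseline detections disappeared after the string changes.
print(f"Detections lost to string changes: {relative_drop(38, 22):.1f}%")
```

Note that the number of engines differs between scans (71, 67 and 69), so comparing raw detection counts and comparing rates give slightly different pictures of the same change.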

The results suggest that the majority of AV vendors did not know how to detect Rubeus itself and were matching on surface features instead. The consequence is that attackers have easy ways to bypass common AVs, which makes those AVs inadequate for protecting your network.
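From a defender's point of view, the weakness is easy to illustrate. The sketch below is a deliberately naive string-signature check, written by us for illustration; it is not how any particular AV works. It runs against synthetic byte blobs rather than real binaries, and the marker list is an assumption based on the two strings named above.

```python
# Hypothetical signature list: the two strings named in this post.
MARKERS = [b"Rubeus", b"Kerberoast"]


def find_markers(data: bytes, markers=MARKERS) -> list[str]:
    """Return the markers found in data, as plain ASCII or UTF-16LE text."""
    hits = []
    for marker in markers:
        # Also check the UTF-16LE form, since string literals in compiled
        # programs are often stored as wide characters.
        wide = marker.decode("ascii").encode("utf-16-le")
        if marker in data or wide in data:
            hits.append(marker.decode("ascii"))
    return hits


# Synthetic stand-ins for a binary before and after renaming.
original = b"\x00\x01" + "Rubeus".encode("utf-16-le") + b"\x02Kerberoast\x03"
renamed = b"\x00\x01" + "Redgem".encode("utf-16-le") + b"\x02Ticketing\x03"

print(find_markers(original))
print(find_markers(renamed))
```

The first blob trips both markers and the second trips none, even though nothing about the behaviour would have changed. Detection that keys on what a program does, rather than what it is called, does not have this blind spot.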

Exploiting user accounts

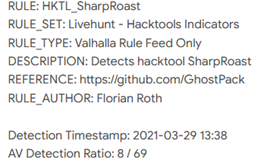

Despite the big gain from string replacement in Rubeus, there were still too many AVs detecting our binary, so we decided to simplify the functionality. We used a tool called SharpRoast (https://github.com/GhostPack/SharpRoast), which targets user accounts with SPNs, to see whether reducing the amount of code would make the binary harder to detect.

During the initial test, 8/70 AVs detected it, as seen above, one of them being Microsoft Defender. This works to our advantage, as we can use a tool called DefenderCheck (https://github.com/matterpreter/DefenderCheck) to see what is being picked up as malicious.

After addressing what DefenderCheck flagged, the tool was no longer picked up by Defender, and it was possible to extract a Kerberos hash in a test AD environment. This variant was not uploaded to VirusTotal, but in the test against Defender it ran without being quarantined. Combining string replacement with stripping a program down to its essentials suggests that Rubeus could be broken up into several smaller programs, one per function, each of which would appear far less malicious.

Antivirus solutions – lessons learned

Although the malware samples used here are not representative of malware in general, the results indicate that we all need to research more carefully which solutions work best for our needs. Budget aside for a moment, resources such as VirusTotal can be used to see which AV solutions identify the threats you regularly encounter.
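As a rough sketch of how that comparison could be done, the snippet below groups engines by verdict for a file report from the VirusTotal v3 API. It assumes you have your own API key and that the report follows the documented layout, with per-engine verdicts under `data.attributes.last_analysis_results`. The helper names and the engine names in the sample are hypothetical.

```python
import json
import urllib.request

VT_FILE_URL = "https://www.virustotal.com/api/v3/files/{}"


def fetch_report(sha256: str, api_key: str) -> dict:
    """Fetch the file report for a hash (needs network access and a key)."""
    request = urllib.request.Request(
        VT_FILE_URL.format(sha256), headers={"x-apikey": api_key}
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def engines_by_category(report: dict) -> dict:
    """Group engine names by verdict category, e.g. malicious or undetected."""
    results = report["data"]["attributes"]["last_analysis_results"]
    grouped = {}
    for engine, verdict in results.items():
        grouped.setdefault(verdict["category"], []).append(engine)
    return {category: sorted(names) for category, names in grouped.items()}


# Made-up report fragment so the example runs offline.
sample_report = {
    "data": {"attributes": {"last_analysis_results": {
        "EngineB": {"category": "undetected", "result": None},
        "EngineA": {"category": "malicious", "result": "HackTool.Example"},
        "EngineC": {"category": "malicious", "result": "Generic.Example"},
    }}}
}

print(engines_by_category(sample_report))
```

Running this over the hashes of threats you have actually seen in your environment gives a quick picture of which engines caught them, bearing in mind that results change over time as vendors share data.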

This does not mean you will get 100% detection, but the more malicious files our AVs detect, the better. Used as part of a defence-in-depth approach, this can greatly reduce the threats that make it through your door. It should also be noted that the best solution may not be the most expensive. The evidence we saw suggests that cost alone is not a good indicator of which AV will be best for you.